When several people work on an animation project from different places, the project sources are usually synced between them using cloud services. Today I would like to share the workflow we use for that.

First of all, as you probably know, we use the Remake build system for our projects, which lets us separate the sources from the rendered data. The rendered data can be regenerated (and updated) from the sources at any time, so it is logical to sync the sources only, which dramatically reduces bandwidth usage.

The rendered data always resides in the «render» subdirectory inside the project root. So we need to sync everything except this directory. Many cloud sync services let you exclude certain directories from sync, but we usually take a different approach, one that is service-independent.

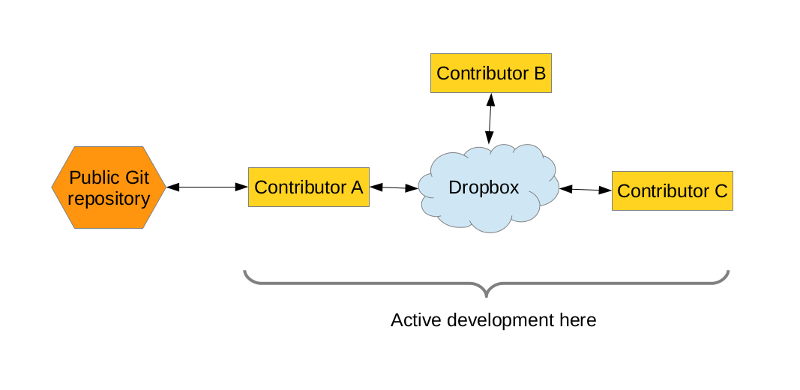

But first, let me clarify one thing: we use Git repositories when we publish our sources, but we don’t use Git repositories for day-to-day sync. Day-to-day sync transfers much more data than you would want to have in the published project: there are many junk changes that we don’t want to appear in the commit history, and some of the data is not intended to be published for a wide audience at all. So I want to draw a distinction: «keeping revision history» and «keeping project copies in sync» are two different tasks. The full project is synced using some service that is available to core collaborators only. At the same time, one responsible person (generally me) selectively commits project sources to the Git repository on a regular basis. That’s the scheme.

Of course, it’s possible to use a Git repository as the sync solution, but it should generally be a different repository from the one where you keep your revision history (if you keep one).

At the moment we use Git for revision history and Dropbox as the sync solution (I guess I have to prepare for a flood of zealots ^__^). Yes, we would really like to use a Git-based solution for sync as well (like SparkleShare or Git-Annex), but that implies having a private Git repository with a lot of space available. We just don’t have one.

OK, let’s get back to our workflow. I will explain it using Dropbox as the example, but the same approach should work with any other cloud sync service.

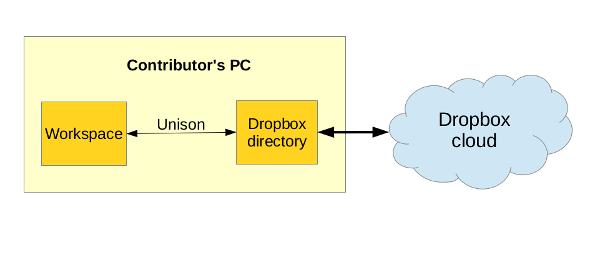

Generally we have one project stored in two places:

- ~/workspace/projects/foo/ — this is a copy to work with.

- ~/Dropbox/projects/foo — this is a copy synced to the cloud.

Those two directories are synced using the Unison tool.

Unison lets you exclude files from sync using very flexible rules. For example, my Unison profile looks like this:

    root = /home/zelgadis/Dropbox
    perms = 0
    path = projects
    ignore = Path {.dropbox}
    ignore = Name {render}
    ignore = Name {.git}
    ignore = Name {snapshots}
    ignore = Name {packs}
    ignore = Name {,.}*{.blend1}
    ignore = Name {,.}*{.blend2}
    ignore = Name {,.}*{.doc#}
    ignore = Name {Makefile}
    ignore = Name {,.}*{.pyc}

That tells Unison to ignore the «render» directory (and, in fact, any file with that name) wherever it appears. As you can see, some other things are ignored as well: Git metadata, Blender backup files, compiled Python files and so on.
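To get a feel for what these rules skip, here is a minimal Python sketch that approximates them. It is only an illustration, not how Unison itself evaluates patterns. The function name `is_ignored` and the example file names are made up, and I assume that `{,.}*{.pyc}` can be written as `*.pyc`, because in Python’s `fnmatch` the `*` also matches a leading dot.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Approximation of the "ignore = Name ..." rules from the profile above.
# A Name rule is checked against a single path component.
NAME_PATTERNS = [
    "render", ".git", "snapshots", "packs",
    "*.blend1", "*.blend2", "*.doc#", "Makefile", "*.pyc",
]

# Approximation of "ignore = Path {.dropbox}": an exact path below the root.
PATH_PATTERNS = [".dropbox"]


def is_ignored(relative_path: str) -> bool:
    """Return True if the path, or any directory above it, matches a rule.

    Checking the parent directories models the fact that an ignored
    directory is skipped together with everything inside it.
    """
    parts = PurePosixPath(relative_path).parts
    for depth in range(1, len(parts) + 1):
        prefix = "/".join(parts[:depth])
        if prefix in PATH_PATTERNS:
            return True
        if any(fnmatchcase(parts[depth - 1], p) for p in NAME_PATTERNS):
            return True
    return False


# Hypothetical paths, relative to the sync root:
print(is_ignored("projects/foo/render/shot01/0001.png"))  # True
print(is_ignored("projects/foo/scene.blend1"))            # True
print(is_ignored("projects/foo/scene.blend"))             # False
```

A sketch like this is handy for checking a new rule against a list of file names before touching the real profile.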

According to that scheme, a contributor works with the project in the ~/workspace/projects/foo/ directory. When he wants to get his changes synced, he just launches Unison and syncs his changes into the Dropbox directory. As soon as the files land in the Dropbox directory, the Dropbox daemon syncs them to the cloud.
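If you prefer to start the sync from a script, the step can be wrapped roughly like this. This is a sketch under assumptions: the profile name `projects` is hypothetical (Unison would look for `~/.unison/projects.prf`), and the helper names are mine. The `-batch` option is a real Unison flag that applies changes without asking questions, so it is off by default here to keep the review step.

```python
import shutil
import subprocess


def build_unison_command(profile: str, batch: bool = False) -> list[str]:
    """Build the command line for running Unison with a named profile."""
    command = ["unison", profile]
    if batch:
        # Non-interactive run: Unison asks no questions at all.
        command.append("-batch")
    return command


def run_sync(profile: str, batch: bool = False) -> int:
    """Run Unison and return its exit code."""
    if shutil.which("unison") is None:
        raise RuntimeError("unison is not installed or not on PATH")
    return subprocess.run(build_unison_command(profile, batch)).returncode


# Example (hypothetical profile name):
# run_sync("projects")
```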

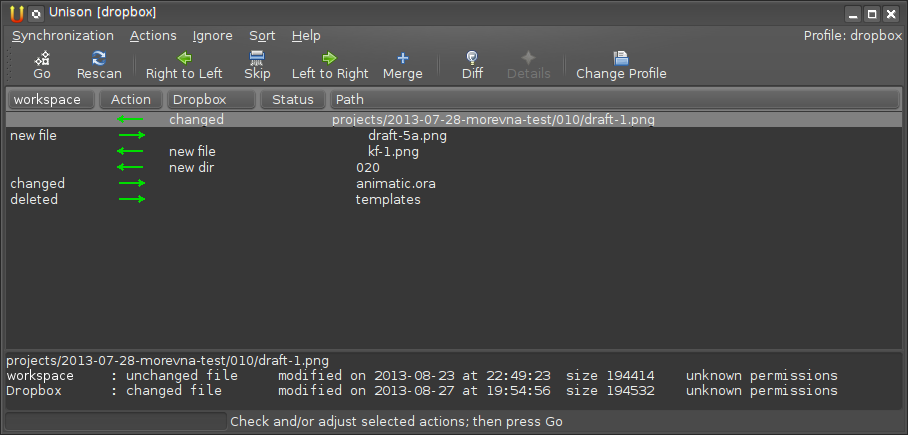

Another good thing is that Unison shows all changes before the sync and lets you synchronize selectively. So you can always see what was changed by other contributors and control which changes get applied. This is the «think before you sync» concept, and I like it a lot.
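The same idea can be illustrated outside of Unison. The sketch below uses Python’s standard `filecmp` module to list what differs between the working copy and the synced copy without changing anything. It is not what Unison does internally (Unison also keeps its own record of the previous sync), and the function name and the default ignore list are my assumptions.

```python
import filecmp
from pathlib import PurePosixPath


def preview_changes(work_dir, synced_dir, ignore=("render", ".git")):
    """Compare two directory trees without modifying them.

    Returns three sorted lists of relative paths:
    (only in work_dir, only in synced_dir, present in both but different).
    """
    only_work, only_synced, differing = [], [], []

    def walk(comparison, rel):
        only_work.extend(str(rel / name) for name in comparison.left_only)
        only_synced.extend(str(rel / name) for name in comparison.right_only)
        differing.extend(str(rel / name) for name in comparison.diff_files)
        # Recurse into directories that exist on both sides.
        for name, sub in comparison.subdirs.items():
            walk(sub, rel / name)

    root = filecmp.dircmp(work_dir, synced_dir, ignore=list(ignore))
    walk(root, PurePosixPath("."))
    return sorted(only_work), sorted(only_synced), sorted(differing)


# Example with the directories from this post:
# new, missing, changed = preview_changes(
#     "/home/zelgadis/workspace/projects/foo",
#     "/home/zelgadis/Dropbox/projects/foo",
# )
```

Names listed in `ignore` are skipped at every level, so the «render» directory never shows up in the preview.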

9 replies

Is there a free replacement for Dropbox that we can use?

Maybe http://libreplanet.org/wiki/Group:SyncReplacement

@Tobias:

I’ve looked at the link and it looks like nothing has really been developed there yet.

As a free Dropbox replacement I would consider SparkleShare (http://sparkleshare.org/) or Git-Annex (http://git-annex.branchable.com/assistant/); both tools are mentioned in my post above. Both are cool, but as I explained, we can’t use them at the moment: we have no Git repository with enough capacity to store our private synced data.

On free replacements, you could use ownCloud and either use the free 1 GB that is offered by ownDrive (https://owndrive.com/) or purchase more storage from the ownCloud servers themselves (https://owncloud.com).

Hey, right, ownCloud is a really good alternative to consider. Thanks!

Or https://syncthing.net/, which is free and decentralised.

Yeah! I am a big fan of this tool. Actually, right now we are in the process of migrating from our previous cloud solution to Syncthing. The reasons to migrate are its simplicity and decentralization. Its decentralized nature can be confusing at first, but with good planning it gives you a lot of power, especially in environments with limited bandwidth (like in our case). ^__^